From Hard to Easy: Changing Database Schema

5 minute read

I hate changing the database!

Legacy databases present two challenges:

- Bad schema that is hard to change.

- Too much logic in the database.

Both delay stories and generate bugs. Both waste your time and each will require thousands of person-hours to fix. We need to find a way to pay that cost incrementally; preferably divided among all the developers on the project.

First let’s divide the problem in half by ignoring the logic for now. After all, lots of logic in the database exists to simplify the schema for applications. We can delete that once the schema is good. So how do we fix the schema?

There are three common ways to change the schema: an upgrade script for the release, a tool that diffs two schemata and generates a custom script per database, and Ruby-on-Rails style migrations.

Each has problems:

- The upgrade script gets complex. It requires conditional logic to handle intermediate states over the course of the release. It gets hard to change, especially when multiple changes hit the same fields. Companies respond by limiting the amount of change per release.

- The tool is non-deterministic. The set of transformations that happen to the integration and development databases, over time, are not necessarily the same one as it will generate for production. This requires special testing for each production variation you have in the field. It also has to transform the data at the same time as the schema, which results in long downtime.

- Migrations work pretty well. But it is easy to end up with too much change in one migration and there is no systematic way to handle zero-downtime deployments.

We are want something like Lego® - simple, uniform blocks that have consistent connectors. We will start with migrations and then make them more uniform.

Refactor Your Database With Lego®

We will change database design the same way we recommend changing code design. We execute the change as a sequence of refactorings, each placed one at a time and stored individually in version control. In other words: stacking legos.

The complexity is that each database has an unique state: its data. We can’t just replace the old database with the new. We have to execute a deployment script that will place the legos (refactorings) for us, transforming the old database to have the new schema. It has to pick the right legos, or refactoring, for each unique database. Additionally, it has to handle all the real-world cases, which include massive database, zero downtime, and customers who skipped your last 3 updates but took a database-impacting security patch.

The key change is in the deployment script. We need to:

- Write it incrementally, one refactoring at a time.

- Allow updating the data asynchonously from changing the schema.

- Make sure it is deterministic, no matter what the starting version.

This [month’s recipe](This month’s recipe shows you how to achieve this change: ) shows you how to achieve this change:

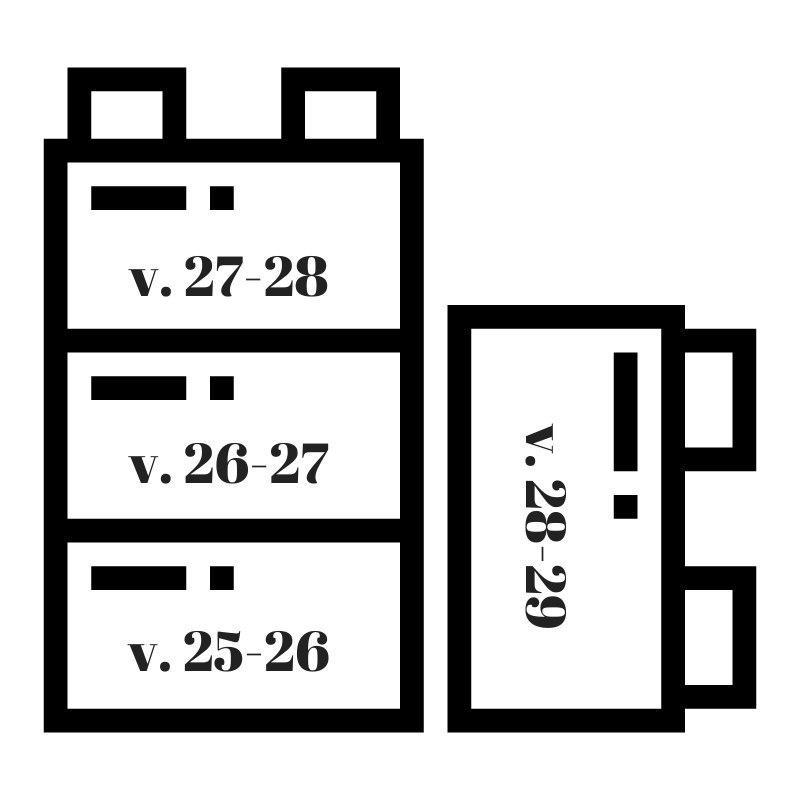

- Use 3-phase refactorings (start, update data, finish).

- Implement each refactoring as its own 3-part ‘../../post/database-schema’script, which takes the database from version N to version N+1 (and back again).

- Have each database store its current version range.

- Deploy a database upgrade by asking the database for its current version and then running the correct scripts, in order.

The result is deterministic transitions from any database version to any other, guaranteed to maintain existing data. It also allows background database processing and zero-downtime database deployments, regardless of database technology or size.

Access the recipe to [Refactor Databases](This month’s recipe shows you how to achieve this change: ) as well as other recipes coming in the future!

The Schema Will Gradually Improve

Once schema change is easy and safe, everyone will start making small improvements. Over time, the design problems will seem to melt away. Each person does a tiny fraction of the work, and every time you step away for a week you come back to a much simpler data design.

Additionally, the deployment will just work. If the change worked once on the developer’s machine, you know it will work exactly the same in every production deployment - no matter how much data they have or how many upgrades they have chosen to skip.

Benefits:

- Code and test a schema change in less than 15 minutes.

- Eliminate deployment bugs due to schema changes.

- Eliminate conflict between multiple changes in flight at once.

- Perform zero-downtime deployments.

Downsides:

- Small performance loss for a time after each update, as the database finishes its data transformation.

- Feels like a tool should be able to write the script for you, yet all tools end up creating bugs.

Demo the value to team and management…

Show two things at your sprint demo:

- Example: One migration script.

- Impact: Show a zero-downtime deployment with a migration that involves data update (such as merging two fields).

Example: Migration Script

Show the directory of migration scripts and the contents of one of them. Then show the final schema update script you had for your last release. Point out the conditional logic it had.

Explain that you will never again have that giant mess to maintain. Each change just requires adding one more file, which is much simpler and less error-prone to manage.

Impact: Zero-Downtime Deployment

To prepare, create a migration script that changes a lot of data. Follow it with another couple migrations.

First show zero-downtime data updates.

- Simulate a deployment start (steps 1-3 of the deployment process described in the recipe).

- Show that the deployment finishes with no downtime, even though the data is not updated.

- Show that the data is being updated in the background.

- Pause the data update before it finishes.

Now show data recovery on failed deployment.

- Update some data in your new fields.

- Do a deployment undo-start ( migrate action=migrate-top –to=prior).

- Show that you get exactly the original database - with the new data changes translated back.

Finally, show that a full deployment leaves no performance degradation.

- Do a full deployment.

- Wait for data migration and finish to run.

- Show that the database is in the final schema with no triggers or other possible performance problems.